In observational studies, it is often difficult to determine whether an outcome is truly caused by a specific treatment or by other underlying differences between groups. Randomized controlled trials avoid this problem by randomly assigning participants to treatment, but they are not always practical or ethical. To address this, researchers use statistical methods such as propensity score matching, and one of the most widely applied variants is nearest neighbor propensity score matching. This approach lets researchers build comparison groups that are balanced on observed characteristics. It approximates some of the benefits of randomization and produces more reliable conclusions from non-random data.

Understanding Propensity Score Matching

Propensity score matching (PSM) is a statistical technique that helps estimate the effect of a treatment or intervention by accounting for the covariates that predict receiving the treatment. The idea was first introduced by Rosenbaum and Rubin in the early 1980s and has since become a cornerstone method in causal inference. The propensity score itself represents the probability that a subject receives the treatment given their observed characteristics.
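
In symbols, the propensity score and the balancing property that justifies matching on it can be written as follows. The notation ($T$ for treatment status, $X$ for observed covariates, $e(x)$ for the score) is a conventional choice and is not taken from this article:

```latex
\begin{aligned}
e(x) &= \Pr(T = 1 \mid X = x) && \text{(propensity score)} \\
X &\perp\!\!\!\perp T \mid e(X) && \text{(balancing property)}
\end{aligned}
```

The second line says that, among subjects with the same propensity score, the distribution of observed covariates does not depend on treatment status. This is why matching on a single number can balance many covariates at once.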

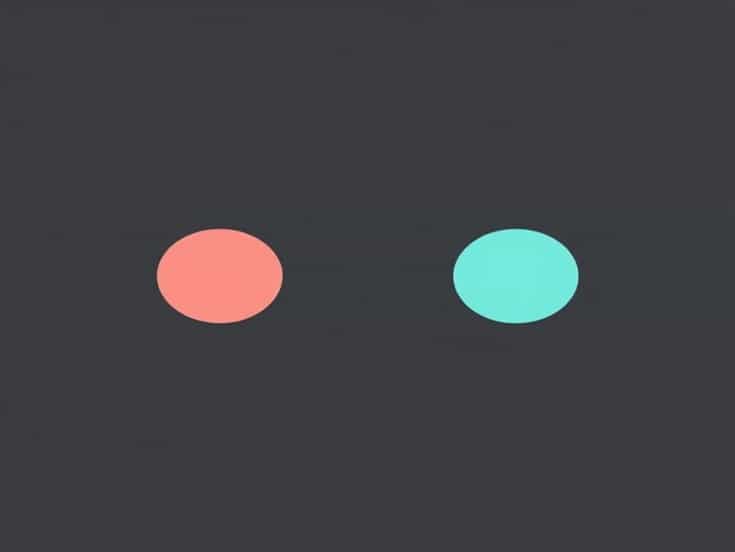

Instead of comparing treated and untreated individuals directly, which may lead to biased results, researchers use propensity scores to match individuals with similar characteristics across the two groups. This helps ensure that the main remaining difference between matched pairs is treatment status rather than observed background variables such as age, gender, or income level.

What Is Nearest Neighbor Matching?

Nearest neighbor matching is a specific algorithm used within propensity score matching. It works by pairing each treated unit with one or more untreated units that have the closest propensity score. The closeness, or distance, between scores is usually measured using absolute differences. This technique simplifies the matching process and ensures that each treated individual is compared with a control subject who is most similar based on the estimated likelihood of receiving treatment.
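
The core search can be sketched in a few lines of Python. This minimal sketch assumes propensity scores have already been estimated and uses absolute difference as the distance. The function name `nearest_control` and the scores are hypothetical and chosen only for illustration.

```python
def nearest_control(treated_score, control_scores):
    """Return (index, distance) of the control unit whose propensity score
    is closest to treated_score, measured by absolute difference."""
    best = min(range(len(control_scores)),
               key=lambda j: abs(control_scores[j] - treated_score))
    return best, abs(control_scores[best] - treated_score)


# Hypothetical propensity scores for four control units.
controls = [0.12, 0.35, 0.48, 0.71]
index, distance = nearest_control(0.40, controls)
print(f"nearest control: index {index}, distance {distance:.2f}")
```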

Basic Concept

Suppose we have two groups in a study: a treatment group that received an intervention and a control group that did not. Each participant’s propensity score is estimated using a logistic regression model or another suitable statistical method. Then, for every treated participant, the algorithm searches the control group for the participant whose propensity score differs least from the treated participant's score. This creates pairs or matched sets that are as comparable as possible.

Matching Ratios

Nearest neighbor matching can be performed using different ratios. The most common options include:

- 1:1 Matching: Each treated individual is matched with one control individual. This yields the closest available pairs but discards unmatched controls, which reduces the effective sample size.

- 1:k Matching: Each treated subject is matched with several controls (e.g., 1:2 or 1:3). This can increase precision, but it may increase bias if the additional controls are less similar to the treated subject.

- With or Without Replacement: In matching with replacement, a control unit can be used more than once for multiple treated individuals. Without replacement, each control unit can be matched only once, ensuring unique pairs.
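
The ratio and replacement options can be sketched as one greedy function. This Python sketch works under stated assumptions. The scores are already estimated, and `match_nearest` and the unit ids are hypothetical. Treated units are processed in input order (greedy matching), which is only one possible strategy; optimal matching is another.

```python
def match_nearest(treated, controls, k=1, replace=False):
    """Greedy 1:k nearest neighbor matching on propensity scores.

    treated, controls: dicts mapping unit id -> propensity score.
    Returns a dict mapping each treated id to a list of up to k control ids.
    Treated units are processed in the order given, so without replacement
    the result can depend on that order."""
    available = dict(controls)  # controls not yet used (no-replacement case)
    matches = {}
    for t_id, t_score in treated.items():
        pool = controls if replace else available
        # Rank candidate controls by absolute propensity-score distance.
        ranked = sorted(pool, key=lambda c: abs(pool[c] - t_score))
        chosen = ranked[:k]
        matches[t_id] = chosen
        if not replace:
            for c_id in chosen:
                del available[c_id]
    return matches


treated = {"T1": 0.30, "T2": 0.32}
controls = {"C1": 0.31, "C2": 0.50, "C3": 0.10}
print(match_nearest(treated, controls, k=1, replace=False))
print(match_nearest(treated, controls, k=1, replace=True))
```

Without replacement, `T2` loses its closest control (`C1`) to `T1` and is paired with `C2`. With replacement, both treated units reuse `C1`.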

Steps in Nearest Neighbor Propensity Score Matching

The process of implementing nearest neighbor matching generally follows a systematic set of steps. While software like R, Stata, or Python can automate this, understanding the underlying procedure helps in interpreting results correctly.

Step 1: Estimate the Propensity Score

The first step involves estimating the probability that each subject receives the treatment. Logistic regression is often used, with treatment as the dependent variable and relevant covariates as predictors. The resulting propensity score ranges between 0 and 1.
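
The following is a dependency-free Python sketch of this step, fitting a logistic regression by plain gradient ascent on the log-likelihood. In practice a library routine would normally be used instead, for example `statsmodels`' `Logit` or scikit-learn's `LogisticRegression` (which applies regularization by default). The function names, learning rate, iteration count, and data are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, t, lr=0.1, n_iter=5000):
    """Fit P(T=1 | X) with logistic regression by batch gradient ascent
    on the log-likelihood. X is a list of covariate rows, t a list of 0/1
    treatment indicators. Returns [intercept, coef_1, ..., coef_p]."""
    n, p = len(X), len(X[0])
    beta = [0.0] * (p + 1)
    for _ in range(n_iter):
        grad = [0.0] * (p + 1)
        for row, ti in zip(X, t):
            z = beta[0] + sum(b * x for b, x in zip(beta[1:], row))
            resid = ti - sigmoid(z)  # observed minus predicted treatment
            grad[0] += resid
            for j, x in enumerate(row):
                grad[j + 1] += resid * x
        beta = [b + lr * g / n for b, g in zip(beta, grad)]
    return beta


def propensity_scores(X, beta):
    """Predicted probability of treatment for each row of X."""
    return [sigmoid(beta[0] + sum(b * x for b, x in zip(beta[1:], row)))
            for row in X]


# Hypothetical data: one standardized covariate and a treatment indicator.
X = [[-1.5], [-1.0], [-0.5], [0.0], [0.5], [1.0], [1.5], [2.0]]
t = [0, 0, 1, 0, 1, 0, 1, 1]
beta = fit_logistic(X, t)
scores = propensity_scores(X, beta)
print([round(s, 3) for s in scores])
```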

Step 2: Match Participants

Using the calculated propensity scores, each treated participant is matched to one or more control participants based on the closest score. A caliper, or maximum allowed distance, can be set to rule out matches that are too far apart. For example, if the caliper is 0.05, a treated unit and a control unit must have propensity scores within 0.05 of each other to be matched.
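
A caliper such as the 0.05 in the example above can be added to a greedy 1:1 matcher, as in the Python sketch below. The function name and data are hypothetical. Many applied analyses instead set the caliper on the logit of the propensity score, with 0.2 standard deviations of the logit being a frequently cited choice. This sketch does not do that and works on the probability scale.

```python
def caliper_match(treated, controls, caliper=0.05):
    """Greedy 1:1 nearest neighbor matching without replacement, with a caliper.

    treated, controls: dicts mapping unit id -> propensity score.
    Returns (pairs, unmatched): pairs maps treated id -> control id;
    unmatched lists treated ids with no available control within the caliper."""
    available = dict(controls)
    pairs, unmatched = {}, []
    for t_id, t_score in treated.items():
        if not available:
            unmatched.append(t_id)
            continue
        c_id = min(available, key=lambda c: abs(available[c] - t_score))
        if abs(available[c_id] - t_score) <= caliper:
            pairs[t_id] = c_id
            del available[c_id]  # each control is used at most once
        else:
            unmatched.append(t_id)
    return pairs, unmatched


treated = {"T1": 0.42, "T2": 0.80}
controls = {"C1": 0.45, "C2": 0.20}
print(caliper_match(treated, controls, caliper=0.05))
```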

Step 3: Assess Matching Quality

After matching, it is crucial to evaluate how well the process balanced the covariates between groups. Researchers typically compare standardized mean differences (SMDs) for each covariate before and after matching. A well-matched sample should show small SMDs across all key covariates; an absolute SMD below 0.1 is a commonly used rule of thumb.
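
For a continuous covariate, the standardized mean difference is commonly computed as the difference in group means divided by the pooled standard deviation. The Python sketch below uses that definition. Binary covariates are usually handled with a proportion-based formula instead, which is not shown here. The function name and ages are hypothetical.

```python
import math
from statistics import mean, variance


def standardized_mean_difference(x_treated, x_control):
    """SMD for a continuous covariate: difference in means divided by the
    pooled standard deviation sqrt((s_t^2 + s_c^2) / 2)."""
    pooled_sd = math.sqrt((variance(x_treated) + variance(x_control)) / 2)
    return (mean(x_treated) - mean(x_control)) / pooled_sd


# Hypothetical ages in a matched sample.
age_treated = [30, 40, 50]
age_control = [35, 45, 55]
print(round(standardized_mean_difference(age_treated, age_control), 3))
```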

Step 4: Analyze Outcomes

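Once matching is completed, the outcome analysis is performed on the matched dataset. Because the treated and control groups are now comparable on observed covariates, differences in outcomes can be more confidently attributed to the treatment itself rather than to measured confounding variables. When treated units are matched to controls in this way, the resulting estimate is usually interpreted as the average treatment effect on the treated (ATT).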
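
Here is a minimal Python sketch of the point estimate, assuming matched sets have already been formed. The estimated average treatment effect on the treated is the average, over matched treated units, of each treated outcome minus the mean outcome of its matched controls. Names and data are hypothetical. The sketch does not compute standard errors, which require methods that account for the matching step.

```python
def att_from_matches(matches, outcome):
    """Average treatment effect on the treated from matched sets.

    matches: dict treated id -> list of matched control ids (1:1 or 1:k).
    outcome: dict unit id -> observed outcome.
    Each treated unit's counterfactual is the mean outcome of its controls."""
    effects = []
    for t_id, c_ids in matches.items():
        if not c_ids:
            continue  # skip treated units left unmatched
        counterfactual = sum(outcome[c] for c in c_ids) / len(c_ids)
        effects.append(outcome[t_id] - counterfactual)
    return sum(effects) / len(effects)


matches = {"T1": ["C1"], "T2": ["C2", "C3"]}
outcome = {"T1": 12, "C1": 10, "T2": 8, "C2": 7, "C3": 5}
print(att_from_matches(matches, outcome))
```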

Advantages of Nearest Neighbor Matching

Nearest neighbor matching offers several benefits, especially when applied correctly. It is one of the most intuitive and widely used matching techniques for causal inference in non-experimental research.

- Easy to Implement: The method is conceptually simple and available in most statistical software packages.

- Transparent and Interpretable: Researchers can easily explain the matching process and visualize pairings between treated and control units.

- Improves Balance: By matching directly on propensity scores, it reduces bias caused by observed confounding variables.

- Flexible: The technique can be used with various sample sizes, matching ratios, and replacement strategies.

Limitations and Potential Issues

While powerful, nearest neighbor propensity score matching is not without limitations. Several challenges can arise during implementation and interpretation.

- Loss of Data: If suitable matches are not found, some treated units may be discarded, reducing the sample size and statistical power.

- Residual Bias: Matching on propensity scores balances only observed covariates. Unobserved or unmeasured variables may still bias the results.

- Choice of Caliper: Selecting an appropriate caliper is crucial. Too narrow a caliper may exclude many subjects, while too wide a caliper may result in poor-quality matches.

- Dependence on Model Specification: The quality of the matching depends heavily on how well the propensity score model is specified. Omitting important confounders or misspecifying their functional form can lead to biased results.
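
The caliper trade-off can be made concrete by rerunning a greedy 1:1 matcher at several caliper widths. This Python sketch uses a hypothetical function and made-up propensity scores and reports how many treated units find a match and how far apart the matched scores are.

```python
def greedy_caliper_match(treated, controls, caliper):
    """Greedy 1:1 matching without replacement; returns the propensity-score
    distance of every pair formed within the caliper."""
    available = list(controls)
    distances = []
    for t_score in treated:
        if not available:
            break
        j = min(range(len(available)), key=lambda i: abs(available[i] - t_score))
        d = abs(available[j] - t_score)
        if d <= caliper:
            distances.append(d)
            available.pop(j)  # this control is used up
    return distances


# Hypothetical propensity scores.
treated = [0.20, 0.36, 0.50, 0.66, 0.90]
controls = [0.19, 0.33, 0.44, 0.70, 0.75]
for caliper in (0.02, 0.05, 0.10, 0.30):
    d = greedy_caliper_match(treated, controls, caliper)
    mean_d = sum(d) / len(d) if d else float("nan")
    print(f"caliper {caliper:.2f}: {len(d)} of {len(treated)} treated matched, "
          f"mean distance {mean_d:.3f}")
```

On these made-up scores, widening the caliper matches more treated units but admits larger average distances, which is the trade-off described in the list above.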

Applications of Nearest Neighbor Propensity Score Matching

This matching technique has wide applications in fields such as economics, epidemiology, education, and social sciences. It is commonly used to estimate the effects of policies, medical treatments, and educational programs when randomization is not possible.

Examples of Practical Applications

- Evaluating the impact of a new healthcare policy on patient outcomes.

- Assessing whether job training programs improve employment rates.

- Measuring the effect of an educational intervention on student performance.

- Comparing outcomes between users and non-users of a new medication in observational studies.

In each case, nearest neighbor matching helps simulate a controlled experiment by aligning treatment and control groups on their likelihood of receiving the intervention.

Enhancements and Alternatives

Over time, several enhancements and variations of nearest neighbor matching have been developed to improve accuracy and computational efficiency. Methods such as kernel matching, caliper matching, and Mahalanobis distance matching are alternatives that may better handle specific datasets or research objectives. However, nearest neighbor matching remains popular due to its straightforward approach and compatibility with different study designs.
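
Of the alternatives named above, kernel matching differs most from nearest neighbor matching. Instead of picking the closest control or controls, it compares each treated unit with a weighted average of all controls. This minimal Python sketch assumes a Gaussian kernel and a fixed bandwidth. Both are illustrative choices; other kernels such as Epanechnikov, and data-driven bandwidths, are also used. The function name and data are hypothetical.

```python
import math


def kernel_att(treated, controls, bandwidth=0.06):
    """Kernel-matching estimate of the average treatment effect on the treated.

    treated, controls: lists of (propensity_score, outcome) tuples.
    Each treated unit's counterfactual outcome is a weighted average of ALL
    control outcomes, with Gaussian weights that shrink as the
    propensity-score distance grows relative to the bandwidth."""
    effects = []
    for p_t, y_t in treated:
        weights = [math.exp(-0.5 * ((p_c - p_t) / bandwidth) ** 2)
                   for p_c, _ in controls]
        counterfactual = (sum(w * y_c for w, (_, y_c) in zip(weights, controls))
                          / sum(weights))
        effects.append(y_t - counterfactual)
    return sum(effects) / len(effects)


# Hypothetical (propensity score, outcome) pairs.
treated = [(0.30, 14.0), (0.55, 11.0)]
controls = [(0.25, 10.0), (0.35, 12.0), (0.50, 9.0), (0.60, 10.0)]
print(round(kernel_att(treated, controls), 3))
```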

Nearest neighbor propensity score matching is a valuable tool for researchers seeking to estimate causal effects from observational data. By pairing treated and untreated individuals with similar propensity scores, it reduces bias from observed confounders and improves the reliability of conclusions. Although it cannot replace randomized experiments, it provides a practical and statistically sound alternative when randomization is infeasible. When applied carefully, with proper model specification, balance checking, and sensitivity analysis, it can yield meaningful and credible insights into treatment effects across many disciplines.

Understanding how and when to use nearest neighbor matching can greatly enhance the quality of observational research, helping to make findings more valid and scientifically robust.